Buy StethoMe® with the Family plan.

Check on your family's health with a smart stethoscope

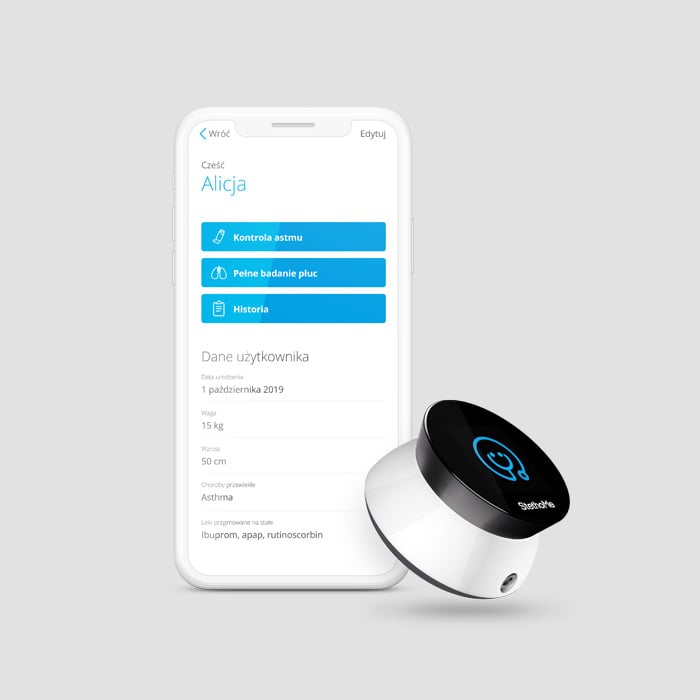

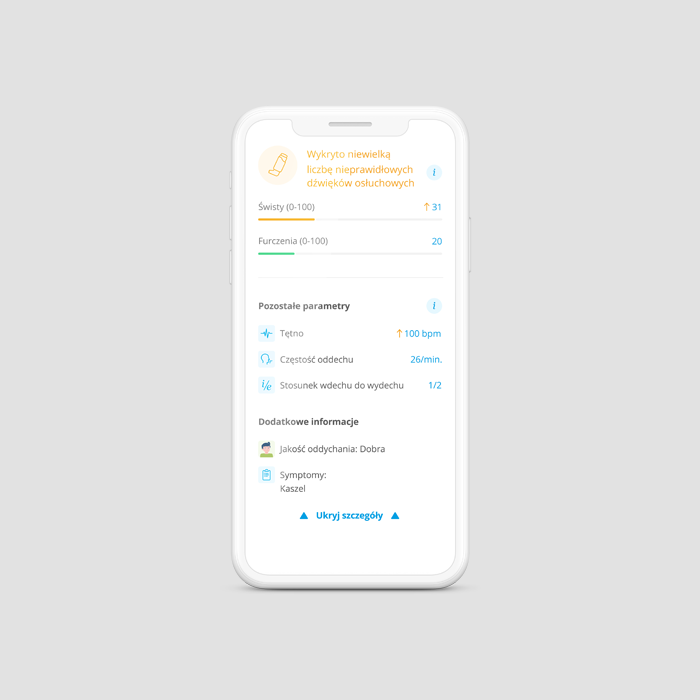

StethoMe® detects and analyzes abnormal sounds in the respiratory system. It lets you examine the lungs at home and get an immediate result that you can send to your doctor so they can respond quickly.

+ free access to the app for 12 months

+ free shipping

after the first 12 months, access to the app costs 14.99 zł per month

With the StethoMe® smart stethoscope:

Want to try it first? Rent StethoMe® for 3 months

+ free access to the app for the rental period

the rental renews automatically every 3 months

after the rental, you can buy the stethoscope at its price minus the rental fees you have already paid

You can cancel StethoMe free of charge within 14 days of paying for your order. To do so, send a notice of withdrawal from the contract to support@stethome.com and return the device to us. After that period, you can still cancel your subscription at any time. Keep in mind, however, that in that case you will not receive a refund for the unused days of your paid StethoMe subscription.

With the StethoMe Family plan, you can add up to 4 patients and view detailed history for the past 3 months. With the StethoMe Plus plan (formerly StethoMe Astma), you get the wheeze monitoring mode for chronic conditions, can add up to 6 patients, and can view detailed history for the past 24 months.

A purchase comes with a 24-month warranty. A rental comes with a warranty on the device for the entire rental period.

The StethoMe app is available on both Android and iOS. Supported operating system versions are Android 9 (and later) and iOS 15 (and later).

StethoMe® electronic stethoscopes ordered as rentals are part of the StethoMe® Loop program, in which used stethoscopes are verified, serviced, and disinfected before being sent to new homes, in line with the highest safety and hygiene standards. Stethoscopes bought outright are factory new.